Introducing Elastio for Amazon FSx for NetApp ONTAP Filers

AI-Ready & Ransomware-Proof FSx for NetApp ONTAP

Amazon FSx for NetApp ONTAP (FSxN) has become the gold standard for high-performance cloud storage, combining the agility of AWS with the data management power of NetApp.

Today, this infrastructure is more critical than ever. As unstructured data volumes explode and enterprises race to feed Generative AI models, FSxN has evolved into the engine room for innovation. It holds the massive datasets that fuel your AI insights and drive business logic.

You cannot build trusted AI on unverified data

FSxN delivers the high-performance platform your enterprise relies on. But trust requires more than uptime; it requires integrity. As enterprise architectures evolve, so do the threats targeting them. The sheer scale of unstructured data creates a blind spot where ransomware can hide, silently corrupting data over weeks. If the data residing on your storage is compromised, your AI models are being trained on poisoned assets.

The Imperative: Verified Data for Trusted AI

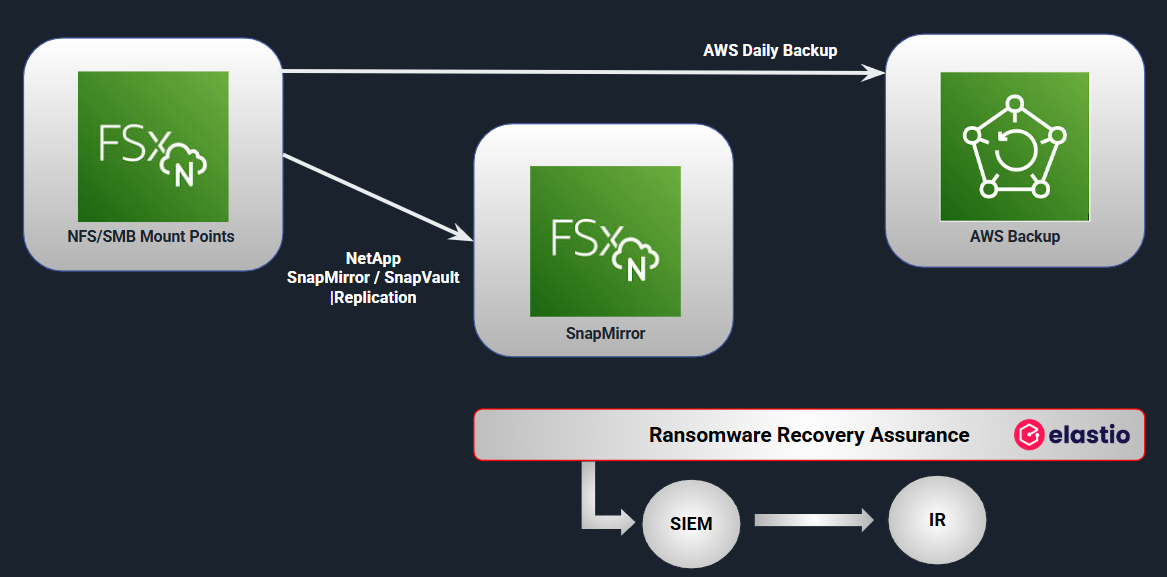

Today, Elastio is introducing comprehensive Ransomware Recovery Assurance for Amazon FSx for NetApp ONTAP. We now provide a layered defense that validates the integrity of the data within your primary volumes, SnapMirror replicas, and AWS Backups, ensuring that your storage is not just available, but provably clean.

The Three-Tier Defense for FSxN

To understand where Elastio fits, we must look at the modern FSxN protection architecture. A resilient implementation typically relies on three layers:

- Primary Filer: Your active, high-performance workload.

- SnapMirror Replica: A near-real-time, read-only copy used for disaster recovery with a low recovery point objective (RPO), e.g., 5 minutes.

- AWS Backup: A daily recovery point for long-term retention and compliance.

Until now, verified recoverability across these layers was a blind spot. Elastio eliminates that uncertainty by integrating with the entire chain to validate data integrity before a crisis occurs.

The Risk of Silent Corruption

Ransomware attacks frequently begin subtly, bypassing perimeter defenses and modifying data blocks without triggering immediate alerts. If these corrupted blocks are replicated to your SnapMirror destination or archived into your AWS Backup vault, you aren't preserving your business—you are preserving the attack.

Having backups is not enough. To ensure resilience, you must answer three questions about your recovery points:

- Are they safe?

- Are they intact?

- Are they recoverable?

Introducing Elastio Recovery Assurance for FSxN

Elastio delivers agentless, automated verification for FSxN environments. Our platform connects to your infrastructure to perform deep-file inspection, providing:

- Behavioral Ransomware Detection: We identify encryption patterns that signature-based tools miss, including slow-rolling and obfuscated encryption.

- Insider Threat Detection: We detect malicious tampering or unauthorized encryption driven by compromised credentials.

- Corruption Validation: We identify unexpected data corruption that could render a backup unusable during a restore.

This coverage spans the entire lifecycle. Elastio scans your SnapMirror replicas for immediate RPO validation and uses AWS Backup restore testing to validate your AWS Backup recovery points without rehydrating production data.
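To give a feel for what an "encryption pattern" in the list above can look like, here is a minimal sketch of one widely known heuristic: byte entropy. This is not Elastio's detection method, which the article does not describe. The function names and the 7.5 bits-per-byte threshold are assumptions chosen for illustration only.

```python
import math
import os
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Return the Shannon entropy of `data` in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)  # frequency of each byte value
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


# Hypothetical threshold, chosen only for illustration.
HIGH_ENTROPY_BITS = 7.5


def looks_encrypted(data: bytes, threshold: float = HIGH_ENTROPY_BITS) -> bool:
    """Flag content whose byte distribution is close to uniformly random."""
    return shannon_entropy(data) >= threshold


if __name__ == "__main__":
    plain = b"quarterly report draft " * 500
    random_like = os.urandom(64 * 1024)  # stand-in for encrypted content
    print(round(shannon_entropy(plain), 2), looks_encrypted(plain))
    print(round(shannon_entropy(random_like), 2), looks_encrypted(random_like))
```

A check this simple also flags compressed archives and media files, which are high-entropy by nature, and it can miss partial or slow-rolling encryption. That gap is one reason behavioral analysis across many files and recovery points matters more than any single-file signal.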

Complementing NetApp’s Native Defenses

Elastio is designed to work with your existing security stack, not replace it.

NetApp’s native Autonomous Ransomware Protection (ARP) is an excellent first line of defense, monitoring your production environment for suspicious activity in real-time.

Elastio complements ARP by operating beyond the production path. We focus on the recovery chain, performing deep-dive analysis on your backups and replicas. If ARP flags a potential threat in production, Elastio allows you to instantly identify which historical recovery point is clean, verifiable, and safe to restore.
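The selection step described here can be sketched as a small data model: each recovery point carries the answers to the three questions (safe, intact, recoverable), and the restore target is the most recent point that passes all three and predates the alert. The `RecoveryPoint` type and `latest_clean_point` function are hypothetical names for illustration, not part of any Elastio or AWS API.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable, Optional


@dataclass(frozen=True)
class RecoveryPoint:
    """Hypothetical record of scan results for one snapshot or backup."""
    point_id: str
    created_at: datetime
    safe: bool         # no ransomware or tampering detected
    intact: bool       # no unexpected corruption detected
    recoverable: bool  # a restore test succeeded

    @property
    def is_clean(self) -> bool:
        return self.safe and self.intact and self.recoverable


def latest_clean_point(
    points: Iterable[RecoveryPoint], before: datetime
) -> Optional[RecoveryPoint]:
    """Return the newest clean point created before `before`, or None."""
    candidates = [p for p in points if p.is_clean and p.created_at < before]
    return max(candidates, key=lambda p: p.created_at, default=None)


if __name__ == "__main__":
    points = [
        RecoveryPoint("rp-1", datetime(2025, 1, 1, 8), True, True, True),
        RecoveryPoint("rp-2", datetime(2025, 1, 1, 9), True, True, True),
        RecoveryPoint("rp-3", datetime(2025, 1, 1, 10), False, True, True),
    ]
    alert_time = datetime(2025, 1, 1, 11)
    print(latest_clean_point(points, before=alert_time))
```

Requiring all three flags keeps the rule conservative: a point that scans clean but has never passed a restore test is not treated as a safe target.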

Compliance: From "Prevention" to "Proof"

Regulatory pressure is shifting. Frameworks like DORA, NYDFS, HIPAA, and PCI DSS are moving away from simple backup retention mandates toward requirements for demonstrable recovery integrity.

Auditors and cyber insurers no longer accept "we have backups" as an answer. They require proof that those backups can be restored. Elastio automates this reporting, providing a validated inventory of clean snapshots that satisfies the most stringent compliance and risk requirements.

Recommended Architecture for Provable Recovery

To achieve maximum resilience with FSxN, we recommend the following layered approach:

- Replicate: Use SnapMirror to maintain a secondary copy with a 5-minute RPO.

- Retain: Use AWS Backup to enforce retention policies.

- Validate:

  - Run Elastio Hourly Scans on SnapMirror replicas to catch infection early.

  - Run Elastio Restore Tests monthly on AWS Backups to verify your vault.
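The recommended cadence above can be written down as data and used to check an existing plan for gaps. This is a minimal sketch: `ProtectionPlan`, `RECOMMENDED`, and `gaps` are hypothetical names, "monthly" is approximated as 30 days, and none of this reflects an actual Elastio, NetApp, or AWS configuration format.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import List


@dataclass(frozen=True)
class ProtectionPlan:
    """Hypothetical summary of an FSxN protection setup."""
    snapmirror_rpo: timedelta
    backup_retention_enforced: bool
    replica_scan_interval: timedelta
    restore_test_interval: timedelta


# Baseline taken from the recommendations in this article.
RECOMMENDED = ProtectionPlan(
    snapmirror_rpo=timedelta(minutes=5),
    backup_retention_enforced=True,
    replica_scan_interval=timedelta(hours=1),
    restore_test_interval=timedelta(days=30),  # "monthly", approximated
)


def gaps(plan: ProtectionPlan, baseline: ProtectionPlan = RECOMMENDED) -> List[str]:
    """List the ways `plan` is weaker than `baseline`."""
    findings = []
    if plan.snapmirror_rpo > baseline.snapmirror_rpo:
        findings.append("SnapMirror RPO is longer than the baseline")
    if baseline.backup_retention_enforced and not plan.backup_retention_enforced:
        findings.append("AWS Backup retention is not enforced")
    if plan.replica_scan_interval > baseline.replica_scan_interval:
        findings.append("Replica scans run less often than the baseline")
    if plan.restore_test_interval > baseline.restore_test_interval:
        findings.append("Restore tests run less often than the baseline")
    return findings


if __name__ == "__main__":
    current = ProtectionPlan(
        snapmirror_rpo=timedelta(minutes=5),
        backup_retention_enforced=True,
        replica_scan_interval=timedelta(hours=6),
        restore_test_interval=timedelta(days=90),
    )
    for finding in gaps(current):
        print(finding)
```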

Conclusion

In the current threat landscape, ransomware is not a matter of if, but when.

Your data is only protected if it can be recovered. With Elastio’s new support for Amazon FSx for NetApp ONTAP, you can move beyond checking a backup box and gain true recovery assurance. In just minutes per TB, you will know whether your data is clean or compromised and be ready to recover with confidence.

3 Key Takeaways

- AI Integrity Requires Clean Data: As FSxN drives generative AI and unstructured data growth, silent corruption becomes a critical risk. Elastio prevents "poisoned" datasets by detecting corruption inside the storage layer.

- End-to-End Validation: Elastio secures the entire FSxN lifecycle, providing deep inspection and clean recovery verification for primary volumes, SnapMirror replicas, and AWS Backups.

- The "Production and Recovery" Defense: Elastio operates outside the production path to complement NetApp’s Autonomous Ransomware Protection (ARP), validating snapshots to ensure you always have a safe place to restore from.

Can you prove your recovery points are clean?

Your board will ask if you can recover clean. This checklist lets you answer with evidence.