Apache Kafka is a popular distributed event store and stream-processing platform that many companies use to process data, and Amazon Managed Streaming for Apache Kafka (MSK) is AWS’s fully managed service for running it.

Replication and mirroring are used to ensure fault tolerance, but these technologies don’t protect against data loss caused by processing errors or application failures. Such failures can severely impact a company’s operations and leave data unrecoverable.

What makes protecting streaming data difficult is the need to persist data along the processing path (e.g., checkpoints) and to be able to rewind the processing of past data. You hope these checkpoints are never needed, but you won’t know whether they are until a failure happens.

A few examples of where data loss may occur:

Leader-follower:

Even with a high replication factor, the leader broker may fail before its followers have become consistent with it, and messages that were not yet replicated can be lost.
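To make this failure mode concrete, here is a toy, in-memory model. It is not Kafka’s actual replication protocol: it assumes a producer that is acknowledged as soon as the leader writes a message (comparable to the producer setting `acks=1`), and the class and method names are hypothetical. A message acknowledged by the leader but not yet copied to the followers disappears when a follower is promoted.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    """A toy replica: just an ordered log of message payloads."""
    log: list[bytes] = field(default_factory=list)


@dataclass
class Partition:
    """Hypothetical model of one partition with a leader and followers."""
    leader: Replica
    followers: list[Replica]

    def produce(self, payload: bytes) -> int:
        # The leader appends and acknowledges immediately (like acks=1);
        # the followers have not copied the message yet.
        self.leader.log.append(payload)
        return len(self.leader.log) - 1  # offset returned to the producer

    def replicate(self) -> None:
        # Followers fetch everything the leader has written so far.
        for follower in self.followers:
            follower.log = list(self.leader.log)

    def fail_leader(self) -> None:
        # The leader crashes and the first follower is promoted.
        self.leader = self.followers.pop(0)


p = Partition(leader=Replica(), followers=[Replica(), Replica()])
p.produce(b"order-1")
p.replicate()          # order-1 now exists on every replica
p.produce(b"order-2")  # acknowledged by the leader only
p.fail_leader()        # the leader dies before the followers catch up
print(p.leader.log)    # [b'order-1']: order-2 was acknowledged but lost
```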

No replication:

At the other end of the spectrum, some companies disable replication completely. A failure of the leader broker then results in data loss.

All brokers failed:

In an extreme situation, or as a result of poor replication design, all brokers may fail at the same time (e.g., an Availability Zone goes down and all the replicas are in that zone).
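A simple guard against this scenario is to check that every partition’s replicas span more than one Availability Zone. The sketch below assumes you already have the replica assignment (partition to broker IDs) and a broker-to-zone mapping, for example from your own cluster inventory; the function name and data shapes are hypothetical and not part of any Kafka or MSK API.

```python
def single_zone_partitions(
    assignment: dict[str, list[int]],
    broker_zone: dict[int, str],
) -> list[str]:
    """Return partitions whose replicas all live in one Availability Zone.

    `assignment` maps a partition name (e.g. "events-0") to its replica
    broker IDs; `broker_zone` maps each broker ID to its zone. Both shapes
    are assumptions made for this sketch.
    """
    at_risk = []
    for partition, brokers in sorted(assignment.items()):
        zones = {broker_zone[b] for b in brokers}
        if len(zones) < 2:
            # A single zone outage would take down every replica.
            at_risk.append(partition)
    return at_risk


zones = {1: "us-east-1a", 2: "us-east-1a", 3: "us-east-1b"}
assignment = {"events-0": [1, 2], "events-1": [2, 3], "audit-0": [1]}
print(single_zone_partitions(assignment, zones))  # ['audit-0', 'events-0']
```

A partition with a single replica, such as `audit-0` here, is flagged as well, so the same check also surfaces the no-replication case.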

Deletion of a topic:

A topic may be accidentally deleted, which removes the messages it stored and may prevent producers from sending data to the topic for consumption.

Incorrect transformation/processing:

Developers may deploy incorrect code that transforms data during consumption, producing wrong results or dropping important information.
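This is where retaining the raw messages pays off: if the original payloads are preserved, a buggy transformation can be fixed and the past data reprocessed. The toy example below assumes JSON payloads and two hypothetical transform functions; it only illustrates the idea of rewinding and replaying retained raw messages, not any particular product’s behavior.

```python
import json

# Raw payloads as they were originally written to the topic.
raw_messages = [
    b'{"id": 1, "status": "ok", "region": "eu"}',
    b'{"id": 2, "status": "failed", "region": "us"}',
]


def transform_buggy(raw: bytes) -> dict:
    # Bug: the "region" field is silently dropped.
    msg = json.loads(raw)
    return {"id": msg["id"], "status": msg["status"]}


def transform_fixed(raw: bytes) -> dict:
    msg = json.loads(raw)
    return {"id": msg["id"], "status": msg["status"], "region": msg["region"]}


# The first processing run loses information.
first_run = [transform_buggy(m) for m in raw_messages]

# Because the raw payloads were retained, processing can be rewound
# and repeated with the corrected code.
replayed = [transform_fixed(m) for m in raw_messages]
print(replayed[1])  # {'id': 2, 'status': 'failed', 'region': 'us'}
```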

Elastio’s data protection capabilities can help solve all the pain points above, providing Kafka business continuity and protecting against data loss and downtime.

How we use Elastio to protect our customers from Kafka outages and application failures

At Elastio, the events stored in Amazon MSK are crucial to our platform. Kafka directly influences the reliability and data consistency of our customers’ Tenants, so we need to protect these events to isolate our customers from Kafka outages and downtime.

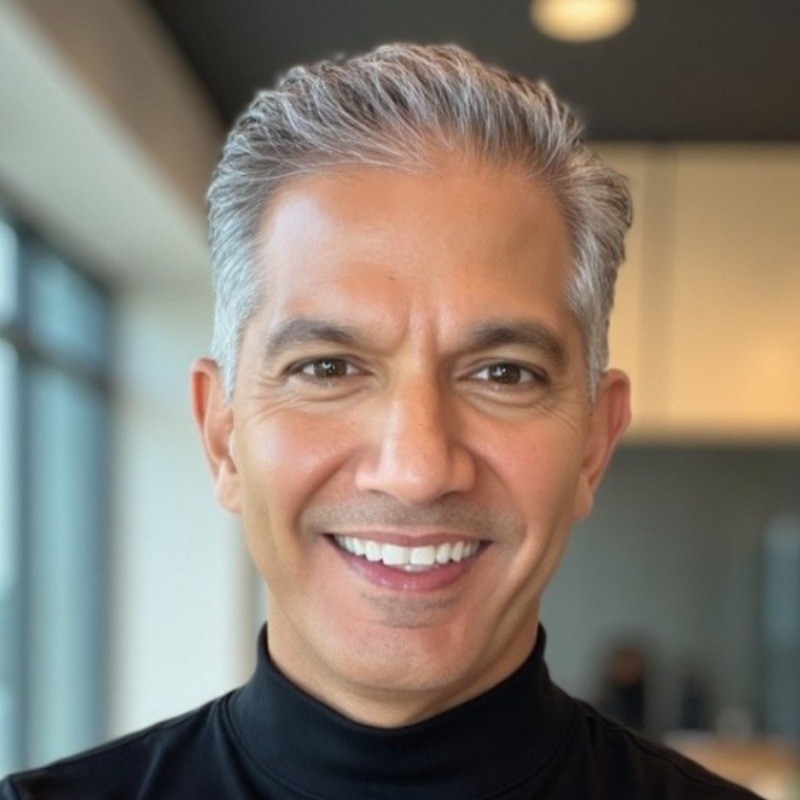

The Elastio Tenant is built on AWS and uses Amazon Elastic Kubernetes Service (EKS), Amazon RDS, Amazon ElastiCache, and Amazon MSK.

The Tenant follows a microservice architecture, and each service is bounded by its domain context. Our services communicate synchronously through internal API calls and asynchronously by passing messages over Amazon MSK. The Tenant also communicates with each customer’s Cloud Connector: synchronously, by calling a Cloud Connector Lambda function, and asynchronously, by polling SQS messages from the Cloud Connector and putting SQS messages into it.

Here is a diagram of how the Tenant works:

As a result, we generate a lot of external and internal asynchronous communication that relies on Kafka. All event messages polled from customers’ Cloud Connectors are sent to a specific Kafka topic. These messages can carry detailed information, such as backup metadata and security report details, which the Tenant processes, stores, and visualizes for our customers. When our team looked for a solution for backing up Kafka, we were surprised to find that no product or service was available for Amazon MSK.

Enter Elastio. The Elastio CLI offers advanced backup options, and its stream backup capability became the key to solving the Kafka backup problem. We built a script around the Elastio CLI that creates a Kafka consumer with a unique consumer group ID and streams a Kafka topic to an Elastio vault, where the data is encrypted, deduplicated, and cataloged as a recovery point for future use.
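Why a unique consumer group ID? In Kafka, committed offsets are tracked per consumer group, and a topic’s partitions are divided among the consumers within a group, so a backup consumer that joined the application’s group would take messages away from it. The toy model below covers only the per-group offset part; it uses no Kafka client, and the group names and `poll` helper are hypothetical.

```python
import uuid
from collections import defaultdict

# Toy broker state: one partition's messages and per-group committed offsets.
log = [b"evt-0", b"evt-1", b"evt-2"]
committed: dict[str, int] = defaultdict(int)  # group id -> next offset to read


def poll(group_id: str, max_records: int = 10) -> list[bytes]:
    """Return unread messages for this group and commit its new position."""
    start = committed[group_id]
    batch = log[start:start + max_records]
    committed[group_id] = start + len(batch)
    return batch


app_group = "tenant-event-processor"     # hypothetical application group
backup_group = f"backup-{uuid.uuid4()}"  # unique group id for the backup run

print(poll(app_group))     # [b'evt-0', b'evt-1', b'evt-2']
print(poll(backup_group))  # the same three messages: groups don't interfere
print(poll(app_group))     # []: the app's position is unaffected by the backup
```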

The script works as follows:

- It captures the offsets of the first and last messages in the stream and stores them as recovery point tags.

- It is agnostic to the message structure and captures the raw message from the topic.

- On the next backup of the same topic in the MSK cluster, it reads the last message offset of the previous backup from the recovery point tags and resumes the message stream just after that offset. This ensures that no Kafka message is backed up twice.

- On restore, which is crucial in case of a Kafka outage, it takes the specified recovery point of the topic and produces the messages it contains back into the topic in the same order in which they were originally stored in the cluster. The code for the consumer and producer is here.
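The offset bookkeeping described above can be sketched in a few lines. This is not Elastio’s script: it models a topic partition as an in-memory list of `(offset, raw_bytes)` records and a recovery point as an object holding `first_offset`/`last_offset` tags, and every name in it (`RecoveryPoint`, `backup`, `restore`) is hypothetical. A real implementation would read through a Kafka consumer with its own unique group ID, stream to the vault through the Elastio CLI, and produce messages back to the topic on restore.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class RecoveryPoint:
    """Hypothetical stand-in for a vault recovery point."""
    tags: dict[str, int]               # first/last offsets stored as tags
    records: list[tuple[int, bytes]]   # raw messages, structure-agnostic


def backup(topic: list[tuple[int, bytes]],
           previous: RecoveryPoint | None) -> RecoveryPoint | None:
    """Back up every message newer than the previous recovery point."""
    # Resume just after the last offset recorded in the previous
    # recovery point's tags, so no message is stored twice.
    start = previous.tags["last_offset"] + 1 if previous is not None else 0
    new = [(off, raw) for off, raw in topic if off >= start]
    if not new:
        return None  # nothing new to back up
    return RecoveryPoint(
        tags={"first_offset": new[0][0], "last_offset": new[-1][0]},
        records=new,
    )


def restore(points: list[RecoveryPoint]) -> list[bytes]:
    """Replay recovery points oldest-first, preserving the original order."""
    out: list[bytes] = []
    for rp in sorted(points, key=lambda p: p.tags["first_offset"]):
        # A real restore would produce each payload back into the topic.
        out.extend(raw for _, raw in rp.records)
    return out


topic = [(0, b"a"), (1, b"b"), (2, b"c")]
rp1 = backup(topic, None)         # tags: first_offset=0, last_offset=2
topic += [(3, b"d"), (4, b"e")]
rp2 = backup(topic, rp1)          # tags: first_offset=3, last_offset=4
print(restore([rp2, rp1]))        # [b'a', b'b', b'c', b'd', b'e']
```

Storing only two offsets per recovery point keeps the incremental logic stateless between runs: each backup needs nothing but the previous recovery point’s tags to know where to resume.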

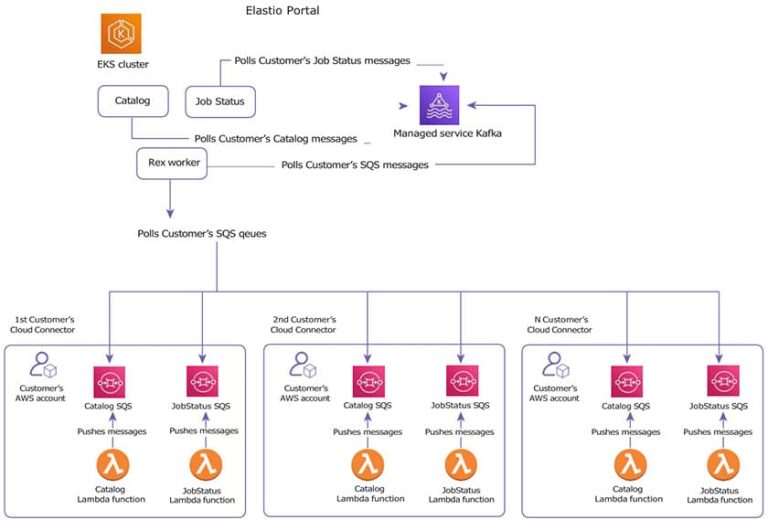

Next, we wanted to embed Elastio into our CloudOps workflow to protect Kafka. We included the Elastio service and Cloud Connector in our Tenant infrastructure, then wrapped the script in a Docker image and deployed it to an Amazon ECS cluster that runs regularly scheduled backups of Kafka topics.

Here is how that works in the Tenant now:

About Elastio

Elastio detects and precisely identifies ransomware in your data and assures rapid post-attack recovery. Our data resilience platform protects against cyber attacks when traditional cloud security measures fail.

Elastio’s agentless deep file inspection continuously monitors business-critical data to identify threats and enable quick response to compromises and infected files. Elastio provides best-in-class application protection and recovery and delivers immediate time-to-value.